On May 5th, Abertay University in Dundee, Scotland celebrated another amazing year of graduates with its annual Abertay Digital Graduate Show. This year, as it celebrated its 20 Years of Games, the University’s events extended across 5 days, encompassing every floor of their student centre and including more than 170 final projects from students along with celebrity alumni. Kicking off the festivities was a fantastic Awards Show for the Sound and Music for Games programme, showcasing the extraordinary work completed by current students over the past year.

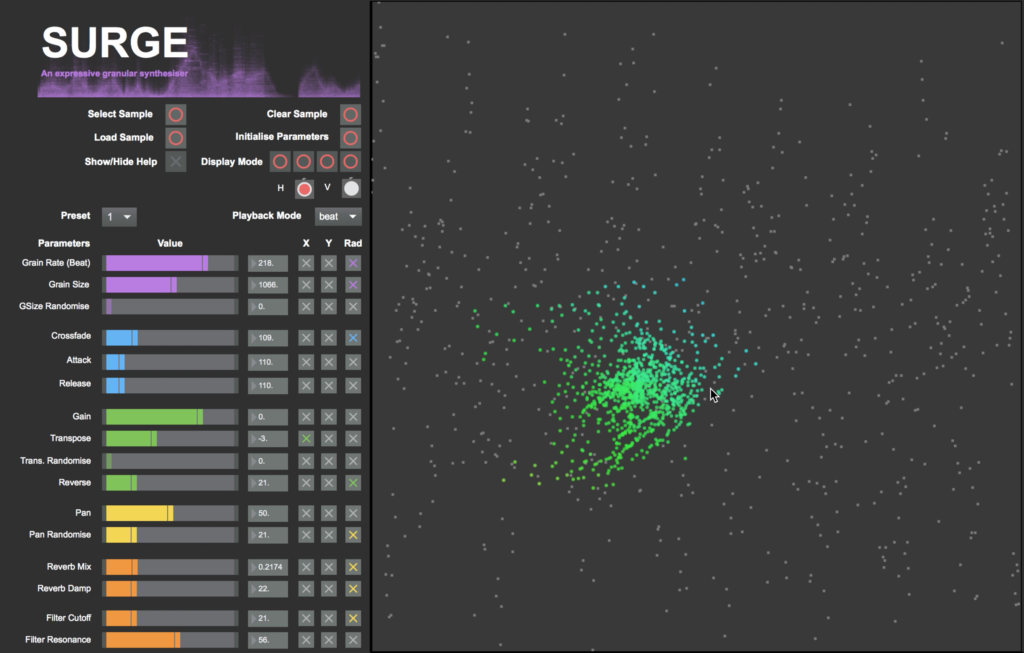

One of those winners, Paul Drauz-Brown, is here with us today. Winner of the Most Innovative Audio Design Award, he created ‘Surge’, a new type of granular synthesiser whose inventive approach to control quickly captured our attention.

Hey, Paul. It’s great to be sitting down with you. How about we start with a bit of your background? How did you get interested in sound design?

I’ve always been captivated by effective design. When I finished school, I decided to study Mechanical Engineering, but found it too dry. I was always more interested in the emotional side of design, and with music being a constant passion, it seemed like a good idea to combine them. I started out in a course on audio production, before deciding to come to Abertay to pursue a career in game audio. Sound has always played a large part in defining experiences for me, so learning to create it myself was a very appealing prospect.

Well, it sounds like a good idea that you switched – you’re doing some great work. Tell us more about ‘Surge’. What inspired you to make it?

The project is an attempt to bring a more intuitive and natural feel to a digital instrument, in this case, a granular synthesiser. I was always quite frustrated by fiddling around with imaginary knobs on a screen, because I find it more difficult to express myself and feel connected to the instrument. So, I began to consider new ways of controlling these parameters.

In the very early stages, I was thinking in terms of the energy of music, as I’ve always imagined it to move like water. So, I began to piece together ideas for a procedural music system controlled by a fluid simulation, but soon realised I’d need a lot more time than I had to do it justice. By retaining the same idea of emergent movement, through the use of the Boids Flocking Algorithm, I was able to recycle some of these concepts. I combined this with the “CataRT” concatenative synthesiser by Diemo Schwarz, in Max/MSP, to create this project I named “Surge”.
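Editor's note: as a rough illustration of the idea Paul describes, the sketch below shows one way moving agents could drive a concatenative synthesiser. It assumes sound units are laid out as points in a two-dimensional descriptor space and that each agent triggers the unit nearest to it. The function names, the two descriptor axes, and the sample values are hypothetical; this is not taken from Surge or from CataRT's actual patch.

```python
import math

def nearest_unit(units, target):
    """Return the index of the unit whose descriptor point is closest to target."""
    return min(range(len(units)), key=lambda i: math.dist(units[i], target))

def select_grains(units, agents):
    """For each agent position, pick the index of the nearest sound unit."""
    return [nearest_unit(units, agent) for agent in agents]

# Hypothetical units, each described by two normalised features
# (for example, pitch and loudness).
units = [(0.1, 0.2), (0.8, 0.9), (0.5, 0.5)]

# Hypothetical agent positions in the same space (e.g. boids at one frame).
agents = [(0.0, 0.0), (0.9, 0.8), (0.45, 0.6)]

print(select_grains(units, agents))  # [0, 1, 2]
```

As the agents move from frame to frame, the selected units change with them, which is the sense in which the movement "plays" the instrument.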

It’s all really impressive, but did you have any bumps along the way? Were there any challenges in particular that stand out?

Initially, it was understanding how to customise CataRT for my purposes, as it’s an enormous and complicated patch. By the time I’d finished my first prototype, the whole thing was quite buggy with all the meddling I’d done. I decided to rebuild it from a new version of CataRT once I understood it properly, which streamlined the finished prototype immensely. I’m still having issues with latency and refresh rates, which I haven’t yet managed to fix, so I plan to redesign it from first principles in the future. CataRT is very powerful, but not optimised for my purposes, so I’ll start from scratch to make something I can publish myself cross-platform.

Did you have any help making Surge? What has been the overall reaction to it?

My tutor, Christos Michalakos, introduced me to both CataRT and Boids after my initial idea proved unfeasible, so I have to give tremendous thanks to him. I did all the development myself, but his input was always very valuable, not only in troubleshooting, but also in brainstorming new ideas for the future of the build.

Feedback has been great! Although many people are not very familiar with granular synthesis, especially in this form, it was clear that this new method of parameter control made the process more intuitive for most of them. I’ve received a lot of input on possible ways of iterating on this idea of user-generated movement, so I’m very excited to get experimenting further.

Now that the year is done, do you have any plans to continue developing Surge further?

Absolutely, as I say, I’ll likely do a ground-up redesign. It would allow me to really optimise the whole thing, and would be far more straightforward to compile for different platforms, such as tablets. This project has been a fantastic introduction to some new methods of sound generation, and I’m now looking into other possibilities, such as utilising neural networks. Central to the whole project was this idea of ‘emergence’, which refers to emergent patterns described by Chaos Theory. By utilising ‘strange attractors’ it’s possible to have endlessly shifting patterns that all revolve around a central theme. This could make them very useful for designing novel procedural music systems which are less robotic. The Boids simulation is an example of such a self-organising system, with each particle only conforming to 3 simple rules, but kept in check by the self-referencing process the system undergoes. I’m looking to bring this type of semi-predictable behaviour to other digital systems, always thinking in terms of musicality.
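Editor's note: the three rules Paul mentions are usually given as cohesion, alignment, and separation. The sketch below is a minimal two-dimensional version of one simulation step, written for illustration only. The weights, radii, and names such as `step` and `make_flock` are assumptions, not values or code from Surge.

```python
import math
import random
from dataclasses import dataclass

@dataclass
class Boid:
    x: float
    y: float
    vx: float
    vy: float

def step(boids, radius=0.3, sep_dist=0.05,
         w_coh=0.01, w_ali=0.05, w_sep=0.1, max_speed=0.02):
    """Advance every boid by one frame and return the new flock."""
    updated = []
    for b in boids:
        neighbours = [o for o in boids
                      if o is not b and math.hypot(o.x - b.x, o.y - b.y) < radius]
        vx, vy = b.vx, b.vy
        if neighbours:
            n = len(neighbours)
            # Rule 1, cohesion: steer towards the neighbours' average position.
            vx += (sum(o.x for o in neighbours) / n - b.x) * w_coh
            vy += (sum(o.y for o in neighbours) / n - b.y) * w_coh
            # Rule 2, alignment: nudge velocity towards the neighbours' average.
            vx += (sum(o.vx for o in neighbours) / n - b.vx) * w_ali
            vy += (sum(o.vy for o in neighbours) / n - b.vy) * w_ali
            # Rule 3, separation: move away from neighbours that are too close.
            for o in neighbours:
                if math.hypot(o.x - b.x, o.y - b.y) < sep_dist:
                    vx += (b.x - o.x) * w_sep
                    vy += (b.y - o.y) * w_sep
        # Cap the speed so the flock stays stable.
        speed = math.hypot(vx, vy)
        if speed > max_speed:
            vx, vy = vx / speed * max_speed, vy / speed * max_speed
        updated.append(Boid(b.x + vx, b.y + vy, vx, vy))
    return updated

def make_flock(count, seed=0):
    """Create boids at random positions with small random velocities."""
    rng = random.Random(seed)
    return [Boid(rng.random(), rng.random(),
                 rng.uniform(-0.01, 0.01), rng.uniform(-0.01, 0.01))
            for _ in range(count)]

flock = make_flock(20)
for _ in range(100):
    flock = step(flock)
```

Each boid only looks at its nearby neighbours, yet the flock as a whole moves in a coordinated way. That group behaviour is not written anywhere in the rules themselves, which is what "emergent" means here.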

Shifting gears, slightly. I’m curious – who (or what) inspires you to create work like this? Anyone or any game in particular?

I always find interactive experiences the most engaging, so work done for games always appeals to me. For example, the sound design that can be found in Alien: Isolation is incredible. While the AI monster is never too far away, the subtle changes in the atmosphere as the Alien draws near are subconsciously screaming at you to hide somewhere before it’s too late. I don’t think I’ve ever enjoyed being as stressed as I was for that entire playthrough.

My favourite experience so far, though, was playing through ‘Journey’, by thatgamecompany. The sound design and foley are subtle, giving far more weight to the score. This score is so beautifully composed that it replaces many of the diegetic elements of the game, so the music seems to arise from the environment itself. This, and so many other things, culminate in a truly magical game that I would recommend to anyone in a heartbeat. In the same vein, my goal in any project is always to try and reconcile SFX and music, having them play off each other to create a more emotional and believable world.

Now, for the future. What are your goals after Abertay?

I’m planning to take a Masters course in Games Development at Abertay, tailored for audio, which will give me more opportunities to collaborate on some larger projects. It’s also the perfect time to take the lessons learned and inspiration from this, my dissertation project, and expand on them in my thesis. Hopefully, this summer I’ll also be part of one of six teams taking part in the new ‘Dare Academy’ program, where we’ll compete to produce a game within a few weeks. We’re currently waiting on the panel’s decision after our recent pitch. In any case, my future goal is to help push the boundaries of how sound can be used for expression and interactivity. It’s a thrilling time to be involved with this work, as the possibilities are vast. With games and VR swiftly becoming the most vibrant area of expression in media, I daresay this is where work towards the major milestones will come to fruition, and I’m very excited to be involved with the process.

We’d like to again congratulate Paul on his amazing work as well as the rest of the Abertay students. More about Paul and Surge can be found on his website: TetheredAudio. Information about Abertay’s Sound and Music for Games Programme can be found here.